Real Estate Investment AI

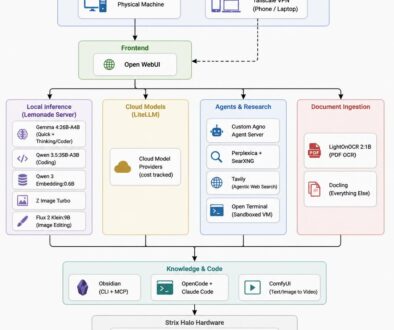

One of my most intricate projects (recently shut down) was an artificial intelligence program that identified potential real estate investment opportunities. At a high level, it combed through a particular MLS each day to look for properties that might be undervalued enough to warrant a flip. The parameters the application used to identify potential investment properties are still proprietary, but I can share the technology I built to make it run every day without any manual intervention.

Gathering Data with Selenium

Most MLSs do not provide an API or a way to export data, so the app needed to browse the website itself. Selenium is a browser automation tool with Python bindings that lets the developer script a web browsing session. The realtor showed me how he would manually search for investment properties, and I translated that into an automated browsing session that captured all of the necessary information. This involved multiple MLS searches, so I had to combine several data sets from the MLS for further processing.
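Here is a minimal sketch of that scrape-then-combine idea. It is not the original code: the table layout, the column order, and the `mls_id` key are all assumptions made for illustration, and a real MLS page would need its own selectors and a login step.

```python
from typing import Dict, Iterable, List


def scrape_search(driver, url: str, row_selector: str) -> List[Dict[str, str]]:
    """Load one search results page and return one dict per listing row.

    Assumes (hypothetically) that each result row is a table row whose
    first three cells are the MLS ID, the address, and the price.
    """
    # Imported lazily so the merge helper below works without Selenium installed.
    from selenium.webdriver.common.by import By

    driver.get(url)
    listings = []
    for row in driver.find_elements(By.CSS_SELECTOR, row_selector):
        cells = row.find_elements(By.TAG_NAME, "td")
        if len(cells) < 3:
            continue  # skip header or spacer rows
        listings.append(
            {
                "mls_id": cells[0].text,
                "address": cells[1].text,
                "price": cells[2].text,
            }
        )
    return listings


def combine_results(result_sets: Iterable[List[Dict[str, str]]]) -> List[Dict[str, str]]:
    """Merge several searches into one record per listing, keyed by MLS ID.

    Fields from later searches are added to (or overwrite) earlier ones.
    """
    merged: Dict[str, Dict[str, str]] = {}
    for listings in result_sets:
        for listing in listings:
            merged.setdefault(listing["mls_id"], {}).update(listing)
    return list(merged.values())
```

In use, each saved search would be scraped with the same `driver` (for example `webdriver.Chrome()`), and the resulting lists passed to `combine_results` so that a property appearing in more than one search ends up as a single record.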

Emailing with Mailchimp

Every day, the application sent out a summary email to investors or realtors who had signed up for the service. Rather than trying to create my own email delivery system that could handle all the quirks that come along for the ride, I decided to use Mailchimp as the email delivery service. This provided several benefits. First, it made it pretty easy to manage payments and signups on the user-facing website. I didn’t have to reinvent the wheel with a custom plugin, because Mailchimp already has a plethora of plugins available.

Second, it allowed me to offload the delivery of a large number of emails. With just a few API calls to Mailchimp, my application could set it and forget it. Delivering a single email from an application is easy. Delivering a lot of emails takes way more thought and infrastructure than you might think. I’m happy to let Mailchimp handle that for me.
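To give a feel for what "a few API calls" can mean, here is a rough sketch against the Mailchimp Marketing API using only the standard library. It assumes the daily summary goes out as a regular campaign to one audience (create the campaign, set its content, send it); the original application may have been structured differently, and the function names here are my own.

```python
import base64
import json
import urllib.request


def api_root(api_key: str) -> str:
    """Mailchimp API keys end in a data center suffix such as '-us6'."""
    data_center = api_key.rsplit("-", 1)[-1]
    return f"https://{data_center}.api.mailchimp.com/3.0"


def mailchimp_request(api_key: str, method: str, path: str, payload=None) -> dict:
    """Send one authenticated JSON request and return the decoded response."""
    body = json.dumps(payload).encode("utf-8") if payload is not None else None
    request = urllib.request.Request(api_root(api_key) + path, data=body, method=method)
    # HTTP Basic auth: any username, with the API key as the password.
    token = base64.b64encode(f"anystring:{api_key}".encode("utf-8")).decode("ascii")
    request.add_header("Authorization", f"Basic {token}")
    request.add_header("Content-Type", "application/json")
    with urllib.request.urlopen(request) as response:
        raw = response.read()
    return json.loads(raw) if raw else {}  # the send action returns no body


def send_daily_summary(api_key, list_id, subject, html, from_name, reply_to,
                       request_fn=mailchimp_request) -> str:
    """Create a campaign, attach the HTML summary, and send it."""
    campaign = request_fn(api_key, "POST", "/campaigns", {
        "type": "regular",
        "recipients": {"list_id": list_id},
        "settings": {
            "subject_line": subject,
            "from_name": from_name,
            "reply_to": reply_to,
        },
    })
    campaign_id = campaign["id"]
    request_fn(api_key, "PUT", f"/campaigns/{campaign_id}/content", {"html": html})
    request_fn(api_key, "POST", f"/campaigns/{campaign_id}/actions/send")
    return campaign_id
```

The `request_fn` parameter is only there so the flow can be exercised with a stand-in during testing; in normal use the default performs the real HTTP calls.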

Automating Everything with AWS

Since the application needed to run every single day, I didn’t want to have to trigger anything manually (and neither did the business owner). But I’m also a frugal type, so I didn’t want to waste the business owner’s money. I eventually landed on an AWS EC2 instance that automatically started itself at a particular time of day and suspended itself when its task was complete. The instance was set up to run the program whenever it resumed from sleep UNLESS a “debugging” key was set inside AWS. This allowed me to fire up the instance and check any local logs or issues without running the application itself.
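The on-boot decision could look something like the sketch below. It assumes the "debugging" key is stored as an EC2 instance tag, which is only one possible reading (it could just as well be a parameter stored elsewhere in AWS), and it leaves out how the daily start was scheduled. The function names are hypothetical.

```python
from typing import Callable, Dict


def debugging_enabled(tags: Dict[str, str], key: str = "debugging") -> bool:
    """Treat common truthy strings on the (assumed) tag as 'debug mode on'."""
    return tags.get(key, "").strip().lower() in {"1", "true", "yes", "on"}


def fetch_instance_tags(ec2_client, instance_id: str) -> Dict[str, str]:
    """Read the instance's tags through the EC2 DescribeTags call."""
    response = ec2_client.describe_tags(
        Filters=[{"Name": "resource-id", "Values": [instance_id]}]
    )
    return {tag["Key"]: tag["Value"] for tag in response["Tags"]}


def run_on_boot(ec2_client, instance_id: str, job: Callable[[], None]) -> str:
    """Run the daily job and stop the instance, unless debugging is set."""
    if debugging_enabled(fetch_instance_tags(ec2_client, instance_id)):
        # Leave the instance running so logs can be inspected by hand.
        return "debug"
    try:
        job()
    finally:
        # Stop the instance even if the job failed, so it never idles on the bill.
        ec2_client.stop_instances(InstanceIds=[instance_id])
    return "ran"
```

With boto3 installed, `ec2_client` would be `boto3.client("ec2")`, and the script would be wired to run at startup. If the instance is configured for hibernation, `stop_instances` also accepts `Hibernate=True`.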

Remote Error Reporting with Sentry

When your application runs in the Cloud, how do you know when something goes wrong? Sentry allows me to log very detailed errors and receive an email notification when they happen. It gathers breadcrumbs along the way, and it only logs them if an error actually happens. So, for instance, I can add extra information to help me track down where in the program flow the error occurred, and that information gets discarded unless something goes wrong. Very slick.
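As a small illustration of the breadcrumb pattern, here is a sketch built on the Sentry Python SDK's `add_breadcrumb` and `capture_exception` functions. The `run_step` wrapper and the "scrape" category are my own inventions for this example, not part of Sentry or of the original program.

```python
def run_step(name, step, sdk=None):
    """Record a breadcrumb for this step, then run it.

    Breadcrumbs are attached only to error events, so on a clean run
    this extra context is simply dropped.
    """
    if sdk is None:
        # Imported lazily; requires the sentry-sdk package and a prior
        # sentry_sdk.init(dsn=...) call somewhere at program startup.
        import sentry_sdk as sdk

    sdk.add_breadcrumb(category="scrape", message=f"starting {name}", level="info")
    try:
        return step()
    except Exception as exc:
        # Send the error, along with every breadcrumb gathered so far.
        sdk.capture_exception(exc)
        raise
```

Wrapping each stage of the daily run this way (log in, run search one, run search two, and so on) means an error report shows which stages completed before things went wrong.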

I added an extra layer to this error reporting as well. Since the errors were mostly accounting for changes on the MLS website, I needed to see what the web browser looked like when the error occurred. But for a web browser running automatically in the Cloud, there isn’t a screen to look at. So I used a virtual screen whenever there was an error. In essence, I rendered the current website view to an image and then had the application email me that screenshot for extra information on the error. I can’t tell you how many times that screenshot turned a tricky error trail into an easy fix.
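A rough sketch of the screenshot email, assuming a Selenium `driver` and the standard library's `email` package. The subject line, file name, and function name are placeholders, and the virtual display itself (a headless browser or a virtual framebuffer) is outside the scope of this example.

```python
from email.message import EmailMessage


def build_error_email(driver, error: Exception, sender: str, recipient: str) -> EmailMessage:
    """Build an email describing the error, with the current page attached as a PNG."""
    message = EmailMessage()
    message["Subject"] = f"Scraper error: {type(error).__name__}"
    message["From"] = sender
    message["To"] = recipient
    message.set_content(f"{error}\n\nPage: {driver.current_url}")
    # Selenium renders the current browser view to PNG bytes.
    message.add_attachment(
        driver.get_screenshot_as_png(),
        maintype="image",
        subtype="png",
        filename="screenshot.png",
    )
    return message
```

The resulting message can be handed to `smtplib.SMTP(...).send_message(message)` or to whatever delivery mechanism is already in place.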

Summary

This was a fun project since it required me to coordinate so many different parts seamlessly. It was mighty satisfying when those daily investment summaries started going out without me lifting a finger. And the remote error reporting mechanism allowed me to fix any issues very quickly. It also gave me an excuse to learn a lot more about AWS.